Introducing New A/B Split Testing Workflows in Vero Cloud

News and Updates

Nicole Kersh

Running A/B tests within a workflow has been a long-standing request, and we’ve had a basic version of the feature in private beta for some time. Thank you to everyone who voted for it and to all the beta users who gave us feedback. The feature is now available to all Vero Cloud users.

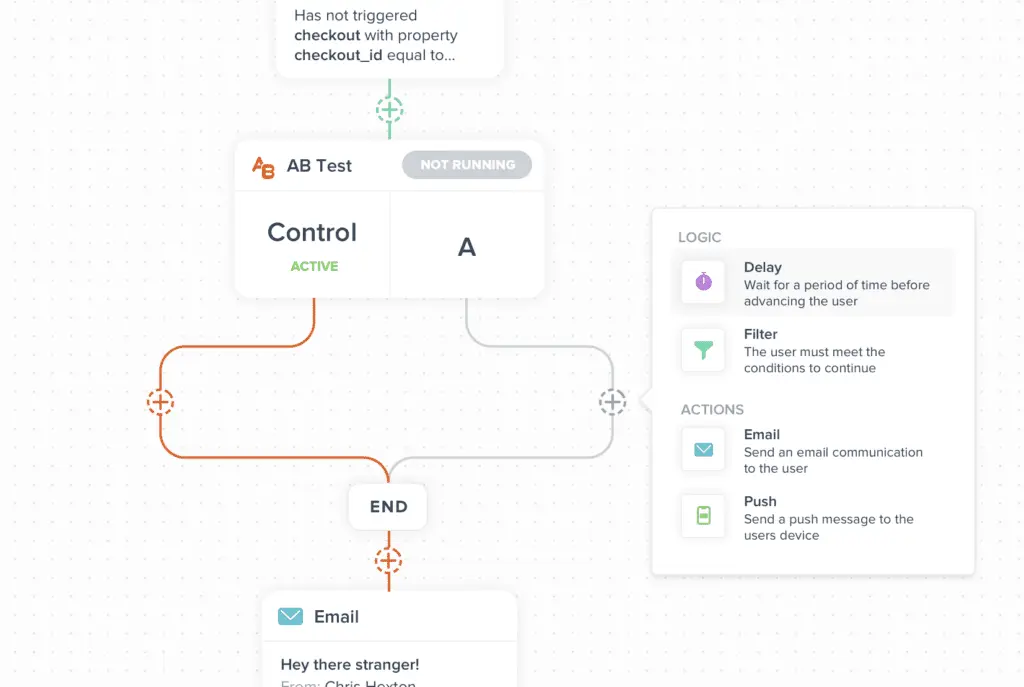

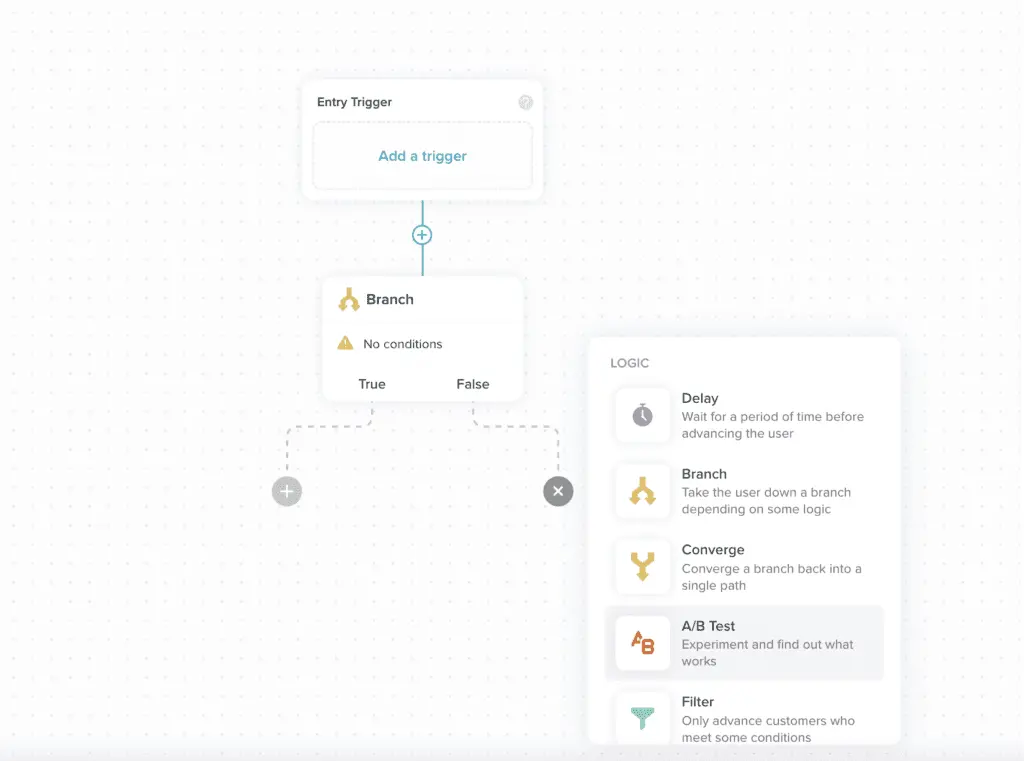

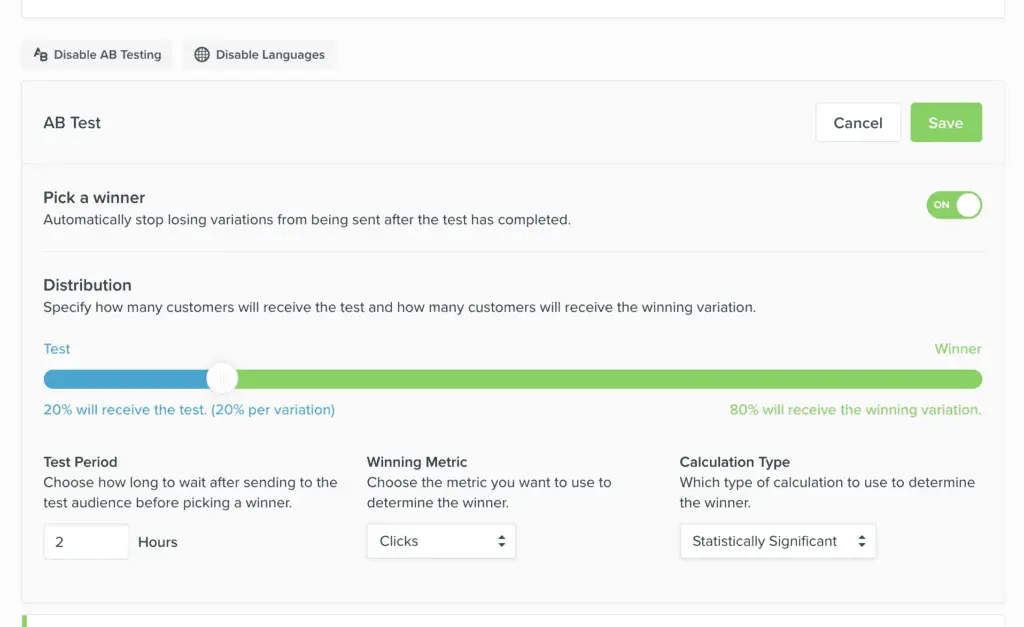

An A/B split test can have up to 5 variations. Each variation creates a new branch that can contain multiple messages, delays or logic nodes. You can decide the percentage of customers who receive each variation, set up a notification and pick a winner when you finish the test. We think this feature is a simple way to run a quick test to gauge how parts of a workflow are performing; however, we know it’s just the start of what an A/B testing feature in Vero Cloud could do.
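To make the percentage split concrete, here is a minimal Python sketch of one common way to assign customers to weighted variations. It is not Vero’s implementation, which this post doesn’t describe: the function name `assign_variation`, the `test_id` parameter, whole-number percentage weights and the minimum of two variations are all assumptions for illustration. Hashing the customer ID, rather than drawing a fresh random number each time, keeps a customer in the same branch if they are evaluated more than once.

```python
import hashlib

# The post states a split test can have up to 5 variations.
MAX_VARIATIONS = 5


def assign_variation(customer_id: str, weights: list[int], test_id: str = "test") -> int:
    """Hypothetical helper: return the index of the branch a customer falls into.

    Assumptions: weights are whole-number percentages (one per variation)
    that sum to 100, and a test has between 2 and MAX_VARIATIONS variations.
    """
    if not 2 <= len(weights) <= MAX_VARIATIONS:
        raise ValueError(f"expected 2 to {MAX_VARIATIONS} variations")
    if any(w < 0 for w in weights) or sum(weights) != 100:
        raise ValueError("weights must be non-negative and sum to 100")

    # Deterministic bucket in 0..99: the same test + customer always hashes the same way.
    digest = hashlib.sha256(f"{test_id}:{customer_id}".encode()).hexdigest()
    bucket = int(digest, 16) % 100

    # Walk the cumulative percentages until the bucket falls inside a range.
    cumulative = 0
    for index, weight in enumerate(weights):
        cumulative += weight
        if bucket < cumulative:
            return index
    raise AssertionError("unreachable: weights sum to 100")
```

For example, `assign_variation("customer-42", [50, 30, 20])` returns 0, 1 or 2, and across many customers roughly half land in the first branch, 30% in the second and 20% in the third.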

Check it out, and if you have any feedback or thoughts on this first iteration, please visit feedback.getvero.com.

Getting Started with A/B Split Tests in Vero

Wondering how many times you should reach out to prospects, which headline works best, what offer converts or how long to wait between messages?

When it comes to customer messaging, it’s not always obvious what’s going to cut through and connect. Rather than basing your marketing decisions on intuition, you can use A/B tests, also known as split tests, as a simple way to find out what actually works best.

Think of A/B tests as mini-experiments. Starting with a hypothesis, you’ll split your audience, create variations of a campaign or workflow, and then use the data to determine whether version A or B performed better. A/B split tests take the guesswork out by testing different messages, strategies and content to see which has the most impact.

Here’s a quick guide to taking full advantage of Workflow A/B Tests.

Less Guessing, More Goals

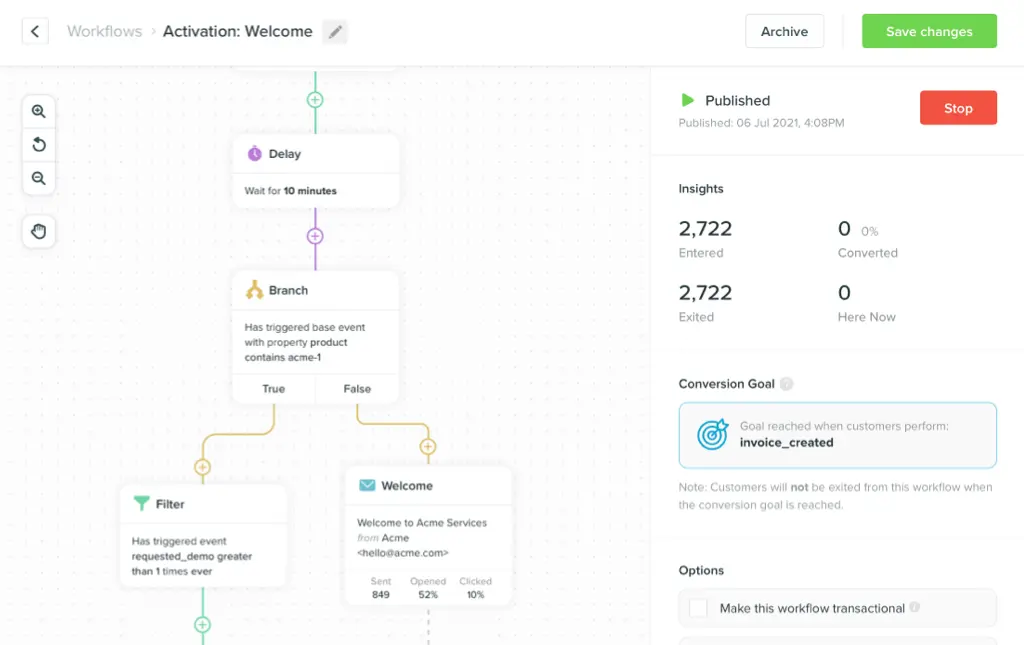

A/B testing with a conversion goal takes your test to the next level. Beyond comparing open and click results, you can link your campaigns directly to actual business outcomes and identify which variation performed best.

To run an effective A/B test, split your audience into two segments and create two different versions of a message or workflow: option A and option B. Then set a goal, so that when the test has run its course, you have a clear measure of success. For example, if your goal is to promote a new product, your measure would be revenue.

Generally speaking, there’s no one-goal-fits-all approach to A/B testing. Goals can be anything from email opens and click-throughs to clicks on a specific button. These outcomes are a solid starting point:

- Drive site visits

- Optimise email engagement rates like open and click-through

- Increase revenue

- Decrease cart abandonment

A/B tests are designed to be short and snappy experiments. When testing your campaigns, keep it simple and limit the number of variables you’re testing in a single split test. Sticking to a handful of elements means you can link success to a given variable. Here are some of the variables you can test with Vero’s A/B workflows:

Content

Try out different subject lines, CTAs, email copy, layouts and images to see what’s working.

Channels

Experiment with channels like email, push notifications or SMS to understand where best to reach your audience.

Timing

Try sending messages at different times or on different days to discover when your audience is most receptive.

Frequency

Too much? Not enough? Experiment with send cadence to find the sweet spot.

To make the most of the test, you need enough people in each variation for the results to be reliable; with too small a sample, a “winner” can simply be down to chance.
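How many is “enough” depends on your baseline conversion rate and the smallest improvement you care about detecting. As a rough guide, the Python sketch below applies the standard sample-size approximation for a two-sided, two-proportion z-test. The helper name `sample_size_per_variation` is hypothetical, and the 5% significance level and 80% power defaults are conventional statistical choices, not Vero settings.

```python
import math
from statistics import NormalDist


def sample_size_per_variation(baseline_rate: float, expected_rate: float,
                              alpha: float = 0.05, power: float = 0.8) -> int:
    """Hypothetical helper: approximate customers needed in EACH variation
    for a two-sided two-proportion z-test to detect a change from
    baseline_rate to expected_rate (both given as fractions, e.g. 0.10)."""
    if not (0 < baseline_rate < 1 and 0 < expected_rate < 1):
        raise ValueError("rates must be strictly between 0 and 1")
    if baseline_rate == expected_rate:
        raise ValueError("rates must differ to detect an effect")

    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    p_bar = (baseline_rate + expected_rate) / 2    # average of the two rates

    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(baseline_rate * (1 - baseline_rate)
                             + expected_rate * (1 - expected_rate))
    )
    # Round up: you can't enrol a fraction of a customer.
    return math.ceil(numerator ** 2 / (baseline_rate - expected_rate) ** 2)
```

With a 10% baseline and a hoped-for 12% conversion rate, this works out to roughly 3,800 customers per variation; halving the expected lift to 11% roughly quadruples the requirement, which is why small tweaks need large audiences.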

Your A/B test is now live! To review the results, open the Results Modal, evaluate the metrics and declare a winner.
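The post doesn’t say which statistics the Results Modal uses, so as an independent sanity check you could compare two variations’ conversion rates with a two-proportion z-test before declaring a winner. This is a generic statistical sketch, not a Vero feature; `compare_variations`, `pick_winner` and the 0.05 threshold are illustrative assumptions.

```python
import math
from statistics import NormalDist


def compare_variations(conversions_a: int, size_a: int,
                       conversions_b: int, size_b: int) -> tuple[float, float]:
    """Two-sided two-proportion z-test.

    Returns (difference in conversion rate, B minus A; p-value)."""
    if size_a <= 0 or size_b <= 0:
        raise ValueError("each variation needs at least one customer")
    rate_a = conversions_a / size_a
    rate_b = conversions_b / size_b
    # Pooled rate under the assumption that both variations perform the same.
    pooled = (conversions_a + conversions_b) / (size_a + size_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / size_a + 1 / size_b))
    if std_err == 0:
        # Nobody (or everybody) converted in both branches: no evidence either way.
        return rate_b - rate_a, 1.0
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return rate_b - rate_a, p_value


def pick_winner(conversions_a: int, size_a: int,
                conversions_b: int, size_b: int, alpha: float = 0.05) -> str:
    """Hypothetical rule: only name a winner when the difference is significant."""
    difference, p_value = compare_variations(conversions_a, size_a,
                                             conversions_b, size_b)
    if p_value >= alpha:
        return "no clear winner"
    return "B" if difference > 0 else "A"
```

For example, if 400 of 4,000 customers convert in variation A and 480 of 4,000 in variation B, `pick_winner(400, 4000, 480, 4000)` returns `"B"`; with 405 conversions in B instead, the gap is too small to call.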

More resources to help you build your split tests:

- https://feedback.getvero.com/workflows/p/ab-testing-in-a-workflow

- https://help.getvero.com/articles/a-b-testing-email-campaigns

Keep up to date with Vero

Keep up with all of Vero’s new features and our latest thinking by visiting our Resource Centre. Product updates like these come directly from customer feedback, so drop us a note or reach out via Slack.

We’re committed to building product-led, community-driven products, co-designed by the folks who use them. We’d love your thoughts on our direction and our product.